Llama 3 Prompt Template

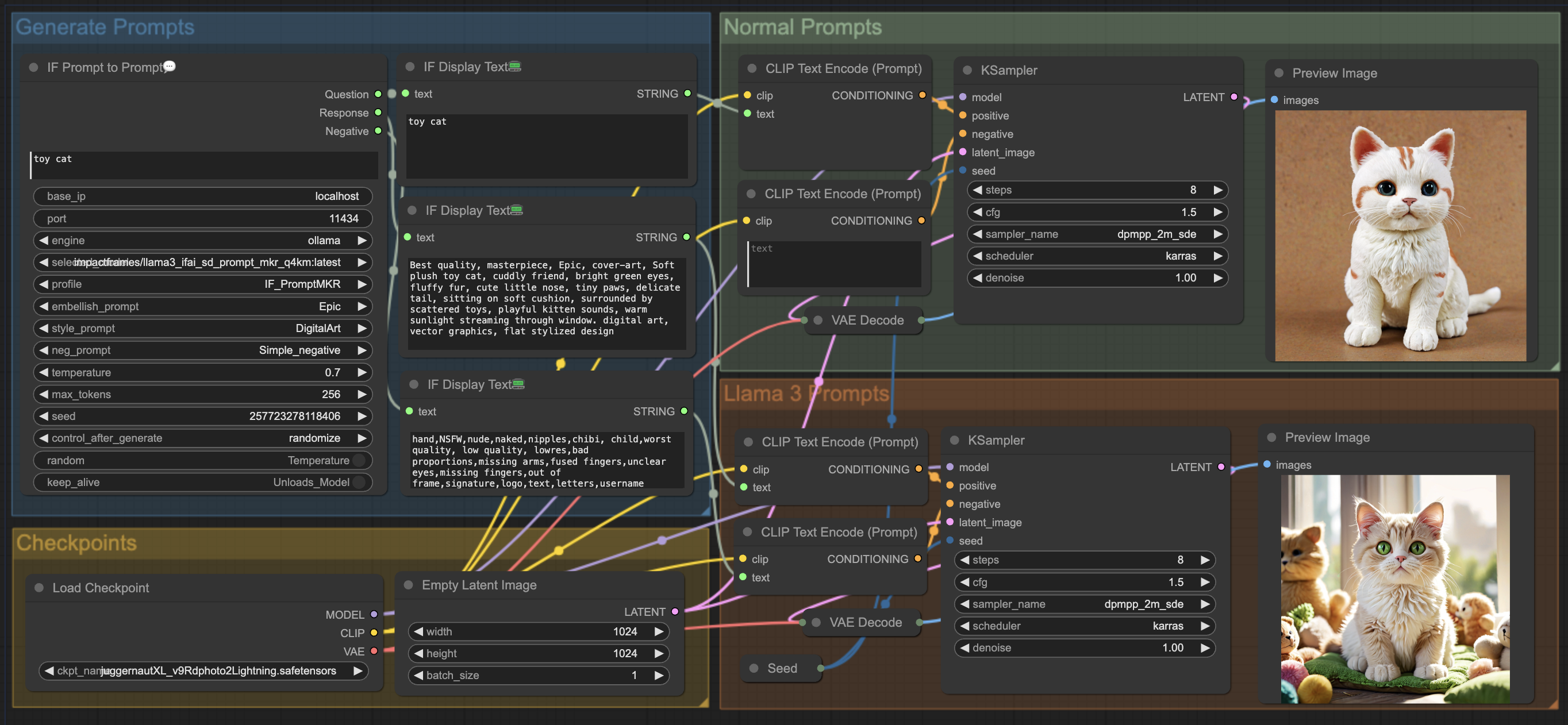

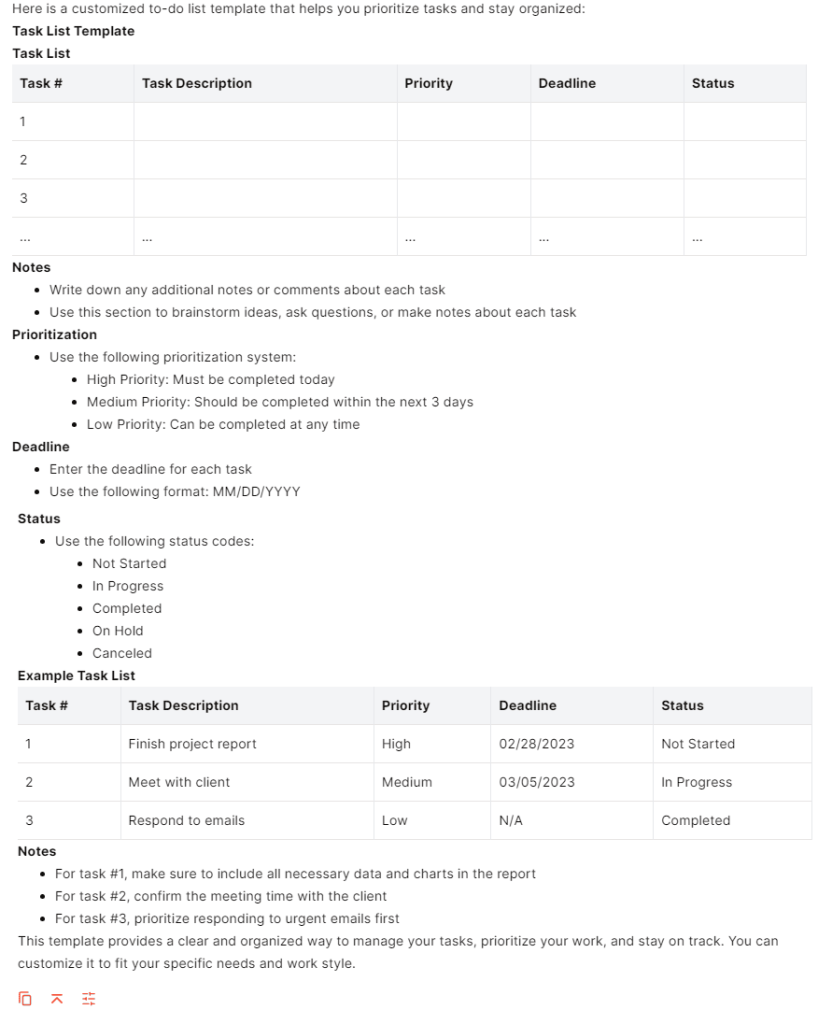

Llama 3.1 prompts are the inputs you provide to the Llama 3.1 model to elicit specific responses. These prompts can be questions, statements, or commands that instruct the model on what ... For many cases where an application is using a Hugging Face (HF) variant of the Llama 3 model, the upgrade path to Llama 3.1 should be straightforward.

Learn best practices for prompting and selecting among Meta Llama 2 & 3 models. Interact with Meta Llama 2 Chat, Code Llama, and Llama Guard models. This page covers capabilities and guidance specific to the models released with Llama 3.2: the Llama 3.2 quantized models (1B/3B), the Llama 3.2 lightweight models (1B/3B) and the Llama ...

This is the current template that works for the other LLMs I am using. However, I want to get this system working with Llama 3.

AI is the new electricity and will ...
Changes to the prompt format. Llama models can now output custom tool calls from a single message to allow easier tool calling. They are useful for making personalized bots or integrating Llama 3 into ...

Llama 3.1 NemoGuard 8B TopicControl NIM performs input moderation, such as ensuring that the user prompt is consistent with rules specified as part of the system prompt.

Explicitly apply the Llama 3.1 prompt template using the model tokenizer. This example is based on the model card from the Meta documentation and some tutorials which ... When you're trying a new model, it's a good idea to review the model card on Hugging Face to understand what (if any) system prompt template it uses.
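To make the idea of explicitly applying the prompt template concrete, here is a minimal sketch that assembles a Llama 3 style chat prompt by hand. It assumes the instruct layout with the `<|begin_of_text|>`, `<|start_header_id|>`, `<|end_header_id|>` and `<|eot_id|>` special tokens; the function names `format_turn` and `build_llama3_prompt` are illustrative, not part of any library. With Hugging Face Transformers you would normally let the tokenizer do this via `tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)`, and the tokenizer's own template for a given model may add extra system text that this sketch does not reproduce.

```python
from typing import Dict, List

# Special tokens of the Llama 3 instruct format (assumed layout; check the model card).
BEGIN_OF_TEXT = "<|begin_of_text|>"
START_HEADER = "<|start_header_id|>"
END_HEADER = "<|end_header_id|>"
END_OF_TURN = "<|eot_id|>"


def format_turn(role: str, content: str) -> str:
    """Render one message: role header, blank line, content, end-of-turn token."""
    return f"{START_HEADER}{role}{END_HEADER}\n\n{content.strip()}{END_OF_TURN}"


def build_llama3_prompt(messages: List[Dict[str, str]]) -> str:
    """Concatenate all turns, then open an assistant header for the model to complete."""
    prompt = BEGIN_OF_TEXT
    for message in messages:
        prompt += format_turn(message["role"], message["content"])
    # The trailing assistant header is what cues the model to generate its reply.
    prompt += f"{START_HEADER}assistant{END_HEADER}\n\n"
    return prompt


messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "What is a prompt template?"},
]
prompt = build_llama3_prompt(messages)
print(prompt)
```

Building the string by hand is mainly useful for inspecting what the model actually sees; for production use, the tokenizer's built-in template is the safer choice because it tracks the exact format the model was trained on.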
Llama 3 template: special tokens. The Llama 3.1 and Llama 3.2 prompt ... Following this prompt, Llama 3 completes it by generating the {{assistant_message}}. It signals the end of the {{assistant_message}} by generating the <|eot_id|>. This can be used as a template to ...

The following prompts provide an example of how custom tools can be called from the output of the model. (system, given an input question, convert it ... When you receive a tool call response, use the output to format an answer to the original ... It's important to note that the model itself does not execute the calls; ...
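Because the model only emits a tool call and does not execute it, the calling application has to parse the output, run the tool, and send the result back. The sketch below shows one way this could look. It assumes the model emits a JSON object with `name` and `parameters` keys and that tool results are returned in an `ipython` turn; both should be checked against the model card for the model you use. `get_weather`, `TOOLS`, `run_tool_call` and `tool_response_turn` are hypothetical names introduced only for illustration.

```python
import json
from typing import Any, Callable, Dict


def get_weather(city: str) -> str:
    """Hypothetical tool: returns a canned answer instead of calling a real service."""
    return f"Sunny in {city}"


# Registry mapping tool names (as the model would emit them) to Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def run_tool_call(model_output: str) -> str:
    """Parse the model's JSON tool call, execute the matching tool, return the result as JSON."""
    call = json.loads(model_output)
    tool = TOOLS[call["name"]]            # raises KeyError for unknown tools
    result = tool(**call["parameters"])   # the application, not the model, runs the call
    return json.dumps({"output": result})


def tool_response_turn(result_json: str) -> str:
    """Wrap the tool result in a turn the model can read (assumed 'ipython' role)."""
    return f"<|start_header_id|>ipython<|end_header_id|>\n\n{result_json}<|eot_id|>"


model_output = '{"name": "get_weather", "parameters": {"city": "Paris"}}'
result_json = run_tool_call(model_output)
print(tool_response_turn(result_json))
```

After the tool response turn is appended to the conversation, the model is prompted again so it can use the output to format its answer to the original question.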
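Since Llama 3 marks the end of the assistant message with `<|eot_id|>`, an application reading raw generated text can cut the reply at that token. The following is a small sketch of that step; `extract_assistant_message` is a hypothetical helper name, and many inference libraries already stop at this token or strip it for you.

```python
END_OF_TURN = "<|eot_id|>"


def extract_assistant_message(completion: str) -> str:
    """Return the text before the first end-of-turn token, with surrounding whitespace removed."""
    # partition() returns the whole string as the first element when the token is absent.
    message, _, _ = completion.partition(END_OF_TURN)
    return message.strip()


raw_completion = "A prompt template fixes the layout of each turn.<|eot_id|>"
print(extract_assistant_message(raw_completion))
```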

Llama 3 Prompt Template Printable Word Searches

Llama 3 Prompt Template

Write Llama 3 prompts like a pro Cognitive Class

Using Llama 3 to Generate Prompts

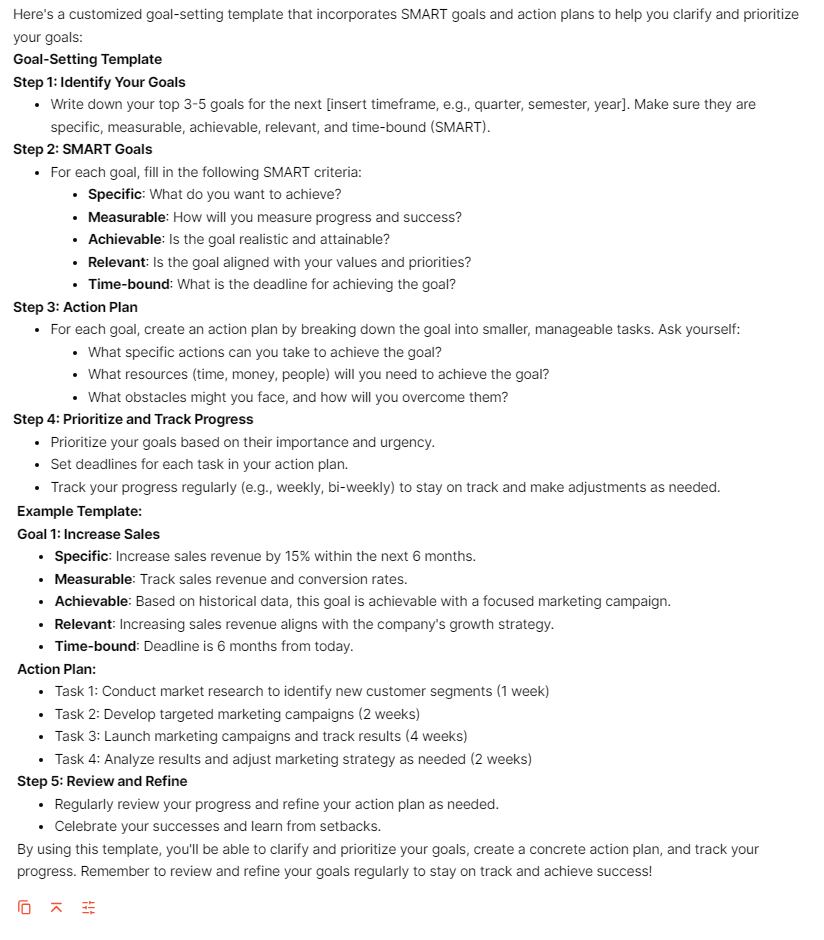

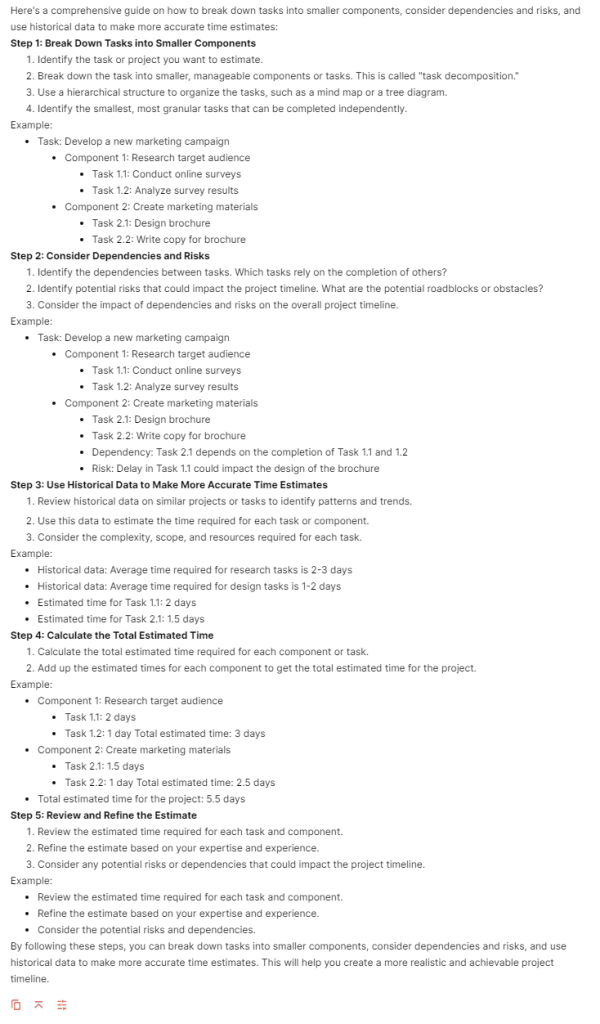

Try These 20 Llama 3 Prompts & Boost Your Productivity At Work

rag_gt_prompt_template.jinja · AgentPublic/llama3instruct

metallama/MetaLlama38BInstruct · What is the conversation template?
